My friend, John R. Butcher, has a new post over at Cranky Taxpayer, analyzing 20 years of VBOE failure. Check it out!

Petersburg: Paradigm of VBOE Fecklessness, 2024 Update

Despite twenty years of “supervision” by the Board and Department of Education, the Petersburg schools marinate in failure.

Documents on the VBOE Web pages show the following events as to Petersburg:

“MOU” is bureaucratese for “Memorandum of Understanding,” which in turn is an edict to which the Board can point in order to claim it is doing something about lousy schools. The MOU process demands a Corrective Action Plan (“CAP”) that sets forth “specific actions and a schedule designed to ensure that schools within [the affected] school division meet the standards established by the Board.”

In the case of Petersburg, the MOU/CAP process has produced grand opportunities for paper-pushing but, as the 2024 data indicate, no halt to ongoing decline in the performance of the city’s schools.

Notes:

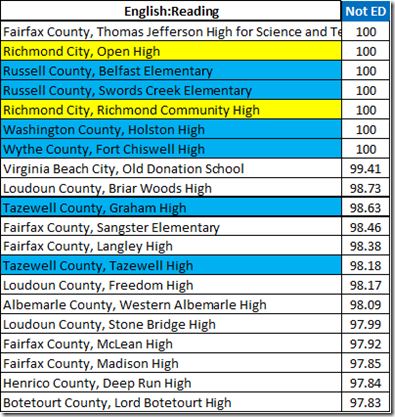

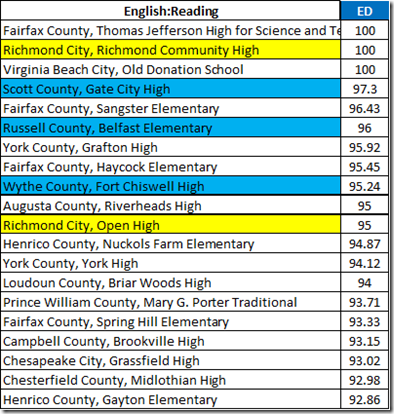

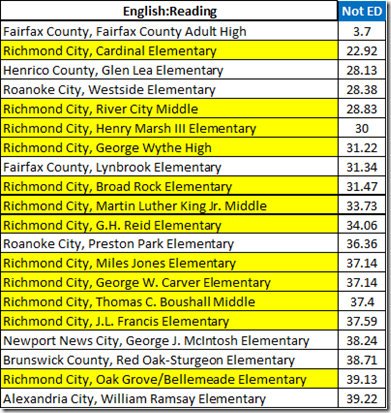

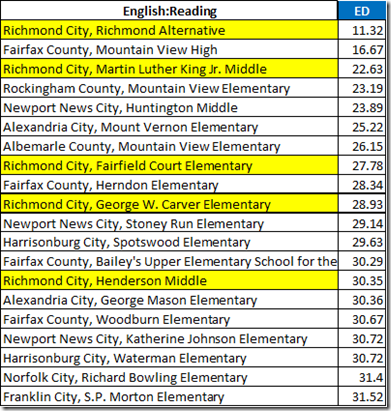

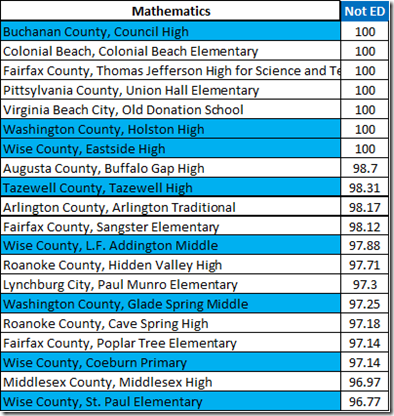

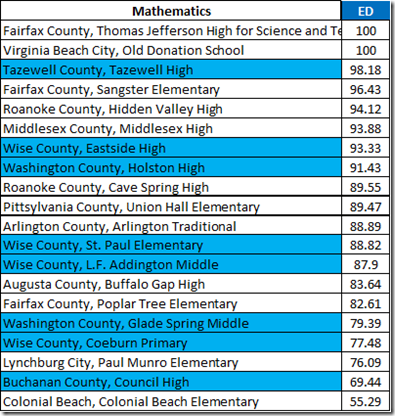

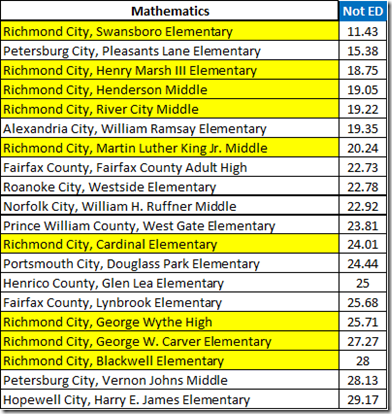

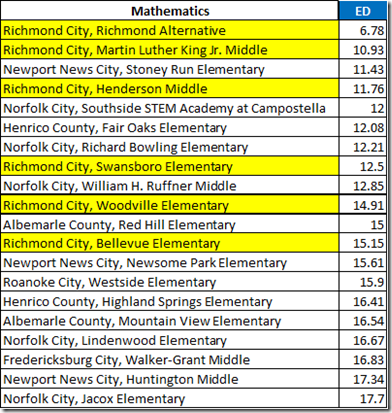

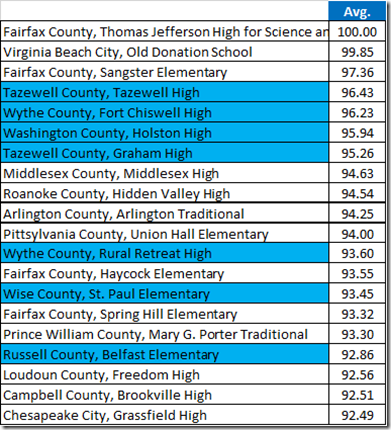

Virginia’s economically disadvantaged (“ED”) students underperform their more affluent peers (“Not ED”) by ca. fifteen to twenty percent, depending on the subject. The SOL average pass rate thus is lowered for the divisions with larger ED populations. To avoid the biased overall average, let’s look at the underlying averages for the ED and Not ED groups.
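To see why the overall average misleads, here is a minimal sketch of the arithmetic. The pass rates and ED shares below are made-up round numbers chosen for illustration, not VDOE data, and `overall_pass_rate` is a hypothetical helper. It assumes the overall rate is simply the enrollment-weighted average of the two group rates.

```python
def overall_pass_rate(ed_share, ed_rate, not_ed_rate):
    """Overall pass rate as the weighted average of the two group rates.

    ed_share is the fraction of test takers who are ED (0.0 to 1.0);
    the rates are percentages.
    """
    return ed_share * ed_rate + (1.0 - ed_share) * not_ed_rate


# Hypothetical group pass rates, identical in both divisions (NOT real data).
ED_RATE = 60.0
NOT_ED_RATE = 80.0

# Two imaginary divisions that differ only in the size of their ED population.
low_ed_division = overall_pass_rate(0.25, ED_RATE, NOT_ED_RATE)
high_ed_division = overall_pass_rate(0.75, ED_RATE, NOT_ED_RATE)

print(f"25% ED division: {low_ed_division:.1f}% overall")
print(f"75% ED division: {high_ed_division:.1f}% overall")
```

In this sketch both divisions do equally well with each group, yet the overall averages differ by ten points solely because of who is enrolled. Comparing the ED and Not ED rates separately removes that effect.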

The 2022 and later Reading results were boosted, no telling how much, by the adoption of a “less rigorous” grading scheme. There was no SOL testing in 2020. Participation in the 2021 testing was voluntary. So, the ’22 and later data are the only post-pandemic numbers with a claim to measuring anything beyond individual performance. And, as to the reading tests, that claim is adulterated.

Here, then, are the recent Reading pass rates for Petersburg and the state.

Petersburg’s 2022-2024 Not ED pass rates remained below the state rate for ED students. As well, both of the 2024 Petersburg rates continue a failure to even begin recovering from the effects of the pandemic.

46.5% of Petersburg’s Not ED students and 58.8% of the ED flunked the 2024 Reading tests.

The Math tests enjoyed their scoring boost in 2019, so the 2022 and later numbers are a more accurate measure of the pandemic effect than are the reading data. Notice the larger decreases.

At Petersburg, the 2023 increases were partially canceled by decreases in 2024. The ’24 failure rates were 56.7% for Not ED and 67.4% for ED.

You read that right: Over half of Petersburg’s more affluent students and over 2/3 of the ED flunked the Math tests in 2024.

The 2024 Writing data for Petersburg are hard to believe, but they are what VDOE reported. If those numbers are valid, they are a ray of hope in a dungeon of despair.

As to History and Social Science, Petersburg showed nice improvement from ’22 to ’23 and mixed, albeit still dismal, results for ’24.

Finally, the Science tests.

Despite twenty years of “supervision” from the Board and Department of Education, Petersburg wallows in failure.

Va. Code § 22.1-8 provides: “The general supervision of the public school system shall be vested in the Board of Education.”

Va. Code § 22.1-253.13:8 provides:

The Board of Education shall have authority to seek school division compliance with the foregoing Standards of Quality. When the Board of Education determines that a school division has failed or refused, and continues to fail or refuse, to comply with any such Standard, the Board may petition the circuit court having jurisdiction in the school division to mandate or otherwise enforce compliance with such standard, including the development or implementation of any required corrective action plan that a local school board has failed or refused to develop or implement in a timely manner.

The Board has yet to sue any school division, even Petersburg, under the authority of § 22.1-253.13:8. Indeed, any such suit would fail: To get the injunction, the Board would have to tell the judge what Petersburg (or Richmond or . . .) could do to meet the standards. The Board manifestly does not know what that might be.

So we are left with Petersburg’s schools harming their students and an incompetent state agency charged with improving the situation.

Postscript:

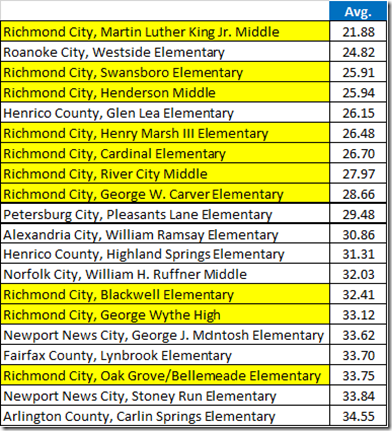

The traditional cheer for the (also awful) Richmond schools has been, “We beat Petersburg.”

In the post-COVID period, Petersburg is working to provide recent evidence for that claim, as if any self-respecting adult would make it.